Introduction to Big Data Hadoop

Hadoop Architecture

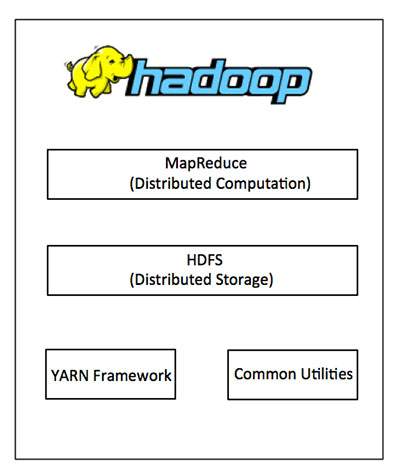

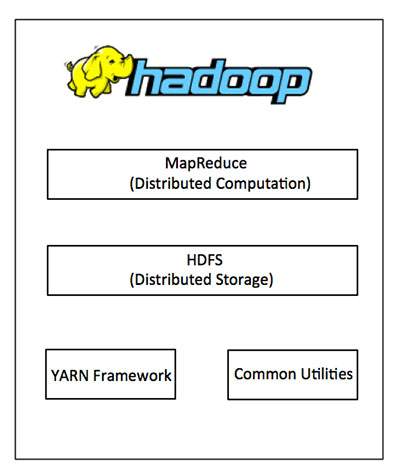

The Hadoop framework includes the following four modules:

- Hadoop Common: These are the Java libraries and utilities required by the other Hadoop modules. They provide filesystem and OS-level abstractions and contain the Java files and scripts required to start Hadoop.

- Hadoop YARN: This is a framework for job scheduling and cluster resource management.

- Hadoop Distributed File System (HDFS™): A distributed file system that provides high-throughput access to application data.

- Hadoop MapReduce: This is a YARN-based system for parallel processing of large data sets.

The architecture diagram depicts these four components of the Hadoop framework.

Since 2012, the term "Hadoop" often refers not just to the base modules mentioned above but also to the collection of additional software packages that can be installed on top of or alongside Hadoop, such as Apache Pig, Apache Hive, Apache HBase, and Apache Spark.

MapReduce

Hadoop MapReduce is a software framework for writing applications that process large amounts of data in parallel on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner.

The term MapReduce actually refers to the following two different tasks that Hadoop programs perform:

- The Map Task: This is the first task, which takes input data and converts it into a set of data, where individual elements are broken down into tuples (key/value pairs).

- The Reduce Task: This task takes the output from a map task as input and combines those data tuples into a smaller set of tuples. The reduce task is always performed after the map task.

Typically, both the input and the output are stored in a file system. The framework takes care of scheduling tasks, monitoring them, and re-executing failed tasks.
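The two tasks can be illustrated with a minimal word-count sketch in plain Python. It does not use the Hadoop API; the function names (`map_task`, `shuffle`, `reduce_task`, `run_job`) are hypothetical, and the grouping step that the framework performs between map and reduce is written out explicitly here only for illustration.

```python
from collections import defaultdict


def map_task(line):
    """Map: break one input line into (word, 1) key/value tuples."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group values by key (done by the framework between map and reduce)."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_task(key, values):
    """Reduce: combine all values for one key into a single tuple."""
    return (key, sum(values))


def run_job(lines):
    """Run map over every line, group by key, then reduce each group."""
    mapped = [pair for line in lines for pair in map_task(line)]
    grouped = shuffle(mapped)
    return dict(reduce_task(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(run_job(["big data hadoop", "Hadoop big cluster"]))
```

In a real cluster each `map_task` call would run on a different node against a different portion of the input, which is why the map function only ever sees one record at a time.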

In classic MapReduce (Hadoop 1.x, before YARN took over resource management), the framework consists of a single master JobTracker and one slave TaskTracker per cluster node. The master is responsible for resource management, tracking resource consumption and availability, scheduling each job's component tasks on the slaves, monitoring them, and re-executing failed tasks. The slave TaskTrackers execute the tasks as directed by the master and periodically report task status back to it.

The JobTracker is a single point of failure for the Hadoop MapReduce service: if the JobTracker goes down, all running jobs are halted.
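The master/slave relationship can be sketched as a small simulation. This is not Hadoop code: the classes below only borrow the names JobTracker and TaskTracker, and the round-robin assignment, the `max_attempts` limit, and the `fail_on` fault injection are all assumptions made for the example.

```python
from collections import deque


class TaskTracker:
    """Hypothetical worker: runs a task and reports success or failure."""

    def __init__(self, name, fail_on=()):
        self.name = name
        # Task ids that fail on their first attempt here (simulated faults).
        self.fail_on = set(fail_on)

    def execute(self, task_id):
        if task_id in self.fail_on:
            self.fail_on.discard(task_id)  # only the first attempt fails
            return False
        return True


class JobTracker:
    """Hypothetical master: assigns tasks round-robin, re-queues failures."""

    def __init__(self, trackers, max_attempts=3):
        self.trackers = trackers
        self.max_attempts = max_attempts  # assumed retry limit

    def run(self, task_ids):
        pending = deque((task_id, 1) for task_id in task_ids)
        log = []  # (task_id, tracker name, attempt number, succeeded)
        turn = 0
        while pending:
            task_id, attempt = pending.popleft()
            tracker = self.trackers[turn % len(self.trackers)]
            turn += 1
            ok = tracker.execute(task_id)
            log.append((task_id, tracker.name, attempt, ok))
            if not ok:
                if attempt >= self.max_attempts:
                    raise RuntimeError(f"task {task_id} failed {attempt} times")
                pending.append((task_id, attempt + 1))  # re-execute later
        return log


if __name__ == "__main__":
    trackers = [TaskTracker("node-1", fail_on={"map-0"}), TaskTracker("node-2")]
    for entry in JobTracker(trackers).run(["map-0", "map-1", "map-2"]):
        print(entry)
```

Because all scheduling state lives in the single `JobTracker` object, losing it loses the pending queue as well, which mirrors the single-point-of-failure problem described above.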

How Does Hadoop Work?

Stage 1

A user or application submits a job to Hadoop (through a Hadoop job client) for processing by specifying the following items:

- The location of the input and output files in the distributed file system.

- The Java classes, packaged as a JAR file, that contain the implementation of the map and reduce functions.

- The job configuration, set through parameters specific to the job.
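The three items a job client supplies can be pictured as a simple record. The sketch below is illustrative only: `JobSpec` and its `validate` method are hypothetical, the paths and JAR name are made up, and `mapreduce.job.reduces` appears purely as a sample configuration parameter.

```python
from dataclasses import dataclass, field


@dataclass
class JobSpec:
    """Hypothetical container for the items a job client specifies."""

    input_path: str   # location of input files in the distributed file system
    output_path: str  # location where output files will be written
    jar_path: str     # JAR file holding the map and reduce classes
    config: dict = field(default_factory=dict)  # job-specific parameters

    def validate(self):
        """Return a list of problems; an empty list means the spec is complete."""
        problems = []
        if not self.input_path:
            problems.append("missing input location")
        if not self.output_path:
            problems.append("missing output location")
        if not self.jar_path.endswith(".jar"):
            problems.append("map/reduce classes must be packaged as a .jar")
        return problems


# Example values are placeholders, not paths from a real cluster.
spec = JobSpec(
    input_path="/user/demo/input",
    output_path="/user/demo/output",
    jar_path="wordcount.jar",
    config={"mapreduce.job.reduces": "2"},
)

if __name__ == "__main__":
    print(spec.validate())
```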

Stage 2

The Hadoop job client then submits the job (the JAR or other executable) and its configuration to the JobTracker. The JobTracker takes responsibility for distributing the software and configuration to the slaves, scheduling and monitoring tasks, and providing status and diagnostic information to the job client.

Stage 3

The TaskTrackers on the different nodes execute their tasks according to the MapReduce implementation, and the output of the reduce function is stored in the output files on the file system.

Advantages of Hadoop

- The Hadoop framework allows the user to quickly write and test distributed systems. It is efficient: it automatically distributes the data and work across the machines and, in turn, exploits the underlying parallelism of the CPU cores.

- Hadoop does not rely on hardware to provide fault tolerance and high availability (FTHA); rather, the Hadoop library itself is designed to detect and handle failures at the application layer.

- Servers can be added or removed from the cluster dynamically and Hadoop continues to operate without interruption.

- Another big advantage of Hadoop is that, apart from being open source, it is compatible with all platforms, since it is Java-based.
